NOTE: As part of my interest in continuing to advocate for humanity in the arts and to show my support for human artists, I have included a link to a fundraiser for a wonderful initiative by

at the end of this article. If you’ve got a few spare dollars, or a story to tell, have a read.

Part 1 Part 2

In 1950, Alan Turing posited a scenario that he named “the Imitation Game”1. In it, a human evaluator has a conversation via text with two other agents, which they can’t see. The evaluator knows that one of the agents that they’re talking to is a computer. The object of the game is to correctly identify which of the two agents is the computer, and which is the human.

The consequences of a machine being able to pass, or even circumvent, the Imitation Game have been a mainstay of science fiction for… I dunno, a very long time. Asimov explored it in I, Robot, Bicentennial Man and a host of others; The Matrix imagined a world where superintelligent computers are able to fool humans not about a machine’s humanity but about the entire world. There are countless examples of this kind of “What if robots become as smart as us?” story across the long historical arm of science fiction and horror. Hell, I’ve written a story about what would happen if a human mind in a computer were to diverge from its original state while the actual human recovered from an accident. The idea that code can escape its base layer and become truly sentient, and what that means for humanity, is a deep shared unease.

More recently, Ex Machina explicitly explored the dangers of a robot that the evaluator knows is a robot, but comes to trust more than the human agent anyway (it ends badly). What happens when we have a machine that interfaces with us as though it’s a human, but is in fact not bound by our ethics or morals or societal boundaries because why the hell would it be? Westworld, in its first season at least, questioned what it means to be a conscious being, and the first season’s finale left the viewer with a disquieting question: “How much free will, agency and consciousness did the hosts really have, and how much do humans really have?”

All of these stories are thrilling, or frightening, or force us to confront what it is that we find so disquieting about the prospect of something being as good as humans at understanding the world.

With the widespread release and dissemination of ChatGPT and other Large Language Models, I’ve realised that sci-fi movies of the past have failed to ask the right question, which is this:

“What if we invent a machine that’s as thick as a bag of hammers, but people use it anyway?”

Large Language Models and How They Do That Thing They Do

Please, if you’re a computer scientist or a linguist, look away; I’m about to grossly oversimplify the way a Large Language Model (LLM) works and annoy anyone who cares about wugs.

The difference between trying to generate an image using AI and trying to generate a sentence is that the value of an image can be approximated and still be broadly understood, while a sentence can’t.

To illustrate this, I’m going to give an example of an image that’s been manipulated. I took a photo at a swimming hole I went to last summer. Each pixel has a number between 0 and 255 attributed to it for the Red, Green, and Blue portion of that pixel. So black is (0,0,0), white is (255,255,255), and pure green is (0,255,0). Now, if I were to take my original photo and feed it through something that output the same image, but with every one of those numbers either moved up or down by one, how different would it look? You decide. One of these images is either one notch brighter or one notch darker than the other. Spot the difference.

Now, there’s a chance you might be able to see which one is darker or brighter than the other. I don’t know, your eyes are probably better than mine. I certainly can’t tell the difference personally.

But now the real question is… which one is the original?2

This is attempting to show that if you’re trying to recreate an image, you can generate something similar and have it be passable. Minor differences in numbers or arrangement may result in strange shapes or colours but the way humans process images, especially initially, has a degree of flexibility to it. This can be seen in machine generated imagery. At first blush, the pictures look like something vaguely passable, and it’s only on inspection that you notice how weird everything gets.
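For the curious, the one-notch shift described above can be sketched in a few lines of Python. This is only an illustration: the tiny 2x2 "image" and the function names are made up for the example (it is not the photo or the tool used for this article), and the clamping to the 0-255 range is an assumption about how such a shift would normally be handled.

```python
def shift_pixel(pixel, delta):
    """Move each of the Red, Green and Blue values by delta, kept within 0-255."""
    return tuple(max(0, min(255, channel + delta)) for channel in pixel)


def shift_image(pixels, delta):
    """Apply the same shift to every pixel in a grid of (R, G, B) tuples."""
    return [[shift_pixel(pixel, delta) for pixel in row] for row in pixels]


# A hypothetical 2x2 image: forest green, water blue, white, black.
image = [
    [(34, 139, 34), (0, 105, 148)],
    [(255, 255, 255), (0, 0, 0)],
]

brighter = shift_image(image, 1)

# The biggest change to any single channel anywhere in the image.
largest_change = max(
    abs(new - old)
    for old_row, new_row in zip(image, brighter)
    for old_pixel, new_pixel in zip(old_row, new_row)
    for old, new in zip(old_pixel, new_pixel)
)

print(brighter)
print("largest change to any channel:", largest_change)
```

No value moves by more than one step out of 256, which is why the two versions are so hard to tell apart by eye.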

Now let’s do the same thing with words. Which one of these has had its ASCII characters shifted by one?

“You should really consider subscribing to this Newsletter.”

OR

Zpv!tipvme!sfbmmz!dpotjefs!tvctdsjcjoh!up!uijt!Ofxtmfuufs/

Now, I know I write a lot of nonsense, but I would hope that it’s obvious which one was my original input.

So we aren’t looking at solving the same problem when you talk about machine learning image generators vs when you talk about language models. Even slight differences render a sentence into complete nonsense.
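The shifted sentence above can be reproduced with a short Python sketch. The function name is made up for the example; the only real machinery is `ord` and `chr`, which convert between a character and its numeric code.

```python
def shift_text(text, delta=1):
    """Move every character's numeric code up (or down) by delta."""
    return "".join(chr(ord(character) + delta) for character in text)


original = "You should really consider subscribing to this Newsletter."
shifted = shift_text(original)

print(shifted)
print(shift_text(shifted, -1))  # shifting back by one recovers the original
```

It is the same size of nudge as the one applied to the photo: one step per value. Here, though, even the spaces and the full stop turn into different characters, so nothing readable survives.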

To solve this problem, machine learning researchers, rather than relying on individual letters and approximate values, group letters into units that they call “tokens”. In some cases, these will be small words, or the portions that make up bigger words. This solves a couple of problems: it reduces the sheer amount of storage space required, and it more closely resembles the way that humans work with language.

We don’t talk in letters. We talk in words, phrases, and sentences. The linguistic term for this is chunking, but they’re called tokens in LLMs. By making a computer evaluate this enormous pile of separate letters and sort them into tokens, you can then turn around and start asking it to figure out what token, statistically, comes after any other token.

This is, in some way, what humans do when we’re listening to people. If you meet someone and they say “Hi,” there’s a fair chance that your brain considers the next likely phrase to be “How are you?”, and though the next word is “how”, you aren’t expecting them to follow up “Hi,” with “How tall is an elk if you measure to the shoulder?”. The phrase “how are you?” is a chunk.

This results in phrases with distinct and discrete meanings. “Hi, how are you?” is completely different to “Hi, who are you?” despite comprising the same letters. For an LLM to be coherent on even a basic level, it has to overcome this level of discrete meaning.

So the project of an LLM is to first generate these tokens, then process a bunch of data to understand which tokens, as a rule, come after one another, based on the tokens that have happened before. It’s a hell of a project, and it needs insane amounts of data (we’ll get to that) to pull off. Unfortunately, this means that how long the model’s output can stay coherent is proportional to the length of the input text it was trained on. The Pile, which is one of the datasets that LLMs are trained on, has a median input length of only a few hundred words, which means that it’s difficult for language models trained on it to remain coherent after that threshold.
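To make the "which token comes next" idea concrete, here is a deliberately tiny Python sketch. It is a toy under loud assumptions: the four-sentence corpus is invented, the "tokens" are just whitespace-separated words rather than the sub-word pieces real models use, and it only ever looks at one previous token, where a real LLM uses a neural network that takes many preceding tokens into account. The function names are hypothetical.

```python
from collections import Counter, defaultdict


def tokenize(text):
    """Crude stand-in for a tokenizer: lowercase the text and split on spaces."""
    return text.lower().split()


def build_next_token_counts(corpus):
    """Count, for every token, which tokens have followed it in the corpus."""
    counts = defaultdict(Counter)
    for line in corpus:
        tokens = tokenize(line)
        for current, following in zip(tokens, tokens[1:]):
            counts[current][following] += 1
    return counts


def most_likely_next(counts, token):
    """Return the token seen most often after `token`, or None if never seen."""
    followers = counts.get(token)
    if not followers:
        return None
    return followers.most_common(1)[0][0]


# An invented training corpus, echoing the examples in the text.
corpus = [
    "hi, how are you?",
    "hi, how are you today?",
    "hi, who are you?",
    "how tall is an elk?",
]

counts = build_next_token_counts(corpus)
print(most_likely_next(counts, "hi,"))   # "how" follows "hi," more often than "who"
print(most_likely_next(counts, "how"))   # "are" beats "tall"
```

Nothing in here knows what a greeting is. It only knows that, in the text it was shown, "how" turned up after "hi," more often than anything else did.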

As a result, it’s only when you see something longer than a few hundred words that it really hits you. These language models aren’t thinking. They aren’t having a conversation with you, no matter how initially convincing they may be. They’re building a statistical model of what, historically, has come after a conversation like the one you’re having with them, then spitting that back out at you. It’s really important to be clear here: these things do not think, they do not make decisions, and they do not understand what they’re saying. They’re only coherent at all because they’ve built a series of semi-coherent tokens from their training data. And yeah, I suppose we need to get the next part about the training data out of the way here.

Generally, See Part 2 of this series.

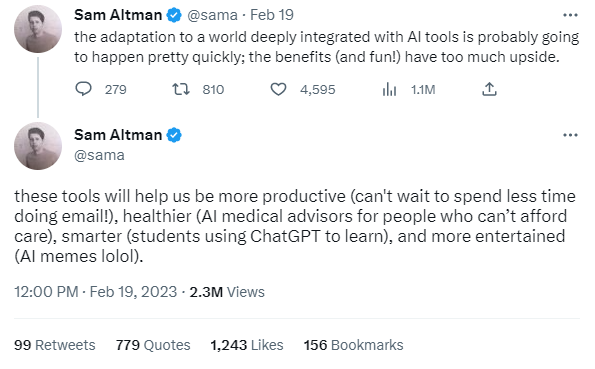

I’m not going to go into the same level of detail here as I did in part two of this series, but all the elements of devaluation of artistic work, biases in training sets and ongoing reinforcement of said biases are as true for LLMs as they were for image generators. It is notable, however, that in the case of LLMs, the elements of global colonialism and exploitation have been addressed by Sam Altman, CEO of OpenAI, at least once.

He thinks it’s a good thing.

I’m not focusing on the potential for this to cause harm to people in this article; I think I’ve made that argument elsewhere. I just want it to be known that the proponents of machine learning models are not shying away from the potential fallout for the disenfranchised. Instead, that’s their rosy picture of the future. If you’re rich enough, sure! You can go and get your healthcare from a trained professional. If you aren’t, then it’s off to the chatbot with you! The fact that this largely applies to people of colour, and that according to ChatGPT you need to be a lot sicker as a black person to warrant the same care as a white person, should ring alarm bells.

Sending students to use ChatGPT for learning is a horrific idea, as is removal of human care from medicine, or removal of decision making apparatuses. Anyone positing such a thing should not be taken seriously as a person, and should be made to answer for the harm they cause should their suggestions be implemented. The fact that Sam Altman has apparently not been chastised or made to apologise by anyone at OpenAI after saying something so openly boneheaded is an indicator of the general dearth of consequences in the tech sector while a hype train is running.

Digital Hallucinogens

One of the other things that makes LLMs uniquely unsuitable for any task that requires specific and correct answers (such as healthcare) to be provided is the tendency for them to “hallucinate”. This term is a euphemism, because most publications don’t want to use the term “make shit up”.

If you checked the link in the previous section (and I know you did. You check your sources, it’s one of your best features.) you might have noticed that the article is titled “ChatGPT is amazing. But beware its hallucinations!”. It provides a series of instances where the LLM made up bibliographic references to things that don’t exist, or got the specifics of a piece of information exactly ass-backward (note again that the article points out a racial undertone to its error). When called out, the machine regurgitates an apology, but there are a lot of people that won’t know to call it out. Imagine trying to use ChatGPT to understand your legal rights and it gives you a list of case laws that you don’t know how to look up. Or asking if a certain medication is appropriate for you. It says “yeah, sure!” and then cites a nonexistent article confirming your beliefs.

I was joking before, by the way. Nobody checks their sources 100%. I write these articles in my spare time; I make mistakes. Hell, in the previous entry I made an error on which version of Stable Diffusion had been released while I was writing it. It happens, and it’s why science has a robust peer review process. The problem here is that people are simply trusting the authority of the machine, and the machine is lying to them. Actually, to say it’s lying is to anthropomorphize it too much. The machine is spitting out spurious data. And even though it’s brainless, and it’s spurious and the machine doesn’t understand what’s being said, people are trusting it. It’s failing the Imitation Game, but it’s slipping through people’s fact-checking filters.

This tendency to hallucinate is already doing harm. A law professor was accused of harassing his students on the basis of a ChatGPT reference to an incident that, as it turned out, never happened. The entire incident was fabricated. Similarly, the Mayor of an Australian town has sued OpenAI after it became clear that the chatbot spread a rumour about him taking bribes.

In Part 2, I mentioned the attempts by companies such as OpenAI to box away the most harmful parts of the outputs of their model. Don’t get me wrong, it’s an admirable thing to try to do, but how do you do it for hallucinations like this? You can’t put the box around the hallucinations until after they happen. By then it’ll be too late: people’s lives, professions and reputations will already have been affected by rumours spat out of a malfunctioning prediction machine.

Busy Work

Ok, so let’s not use ChatGPT for research. Let’s just use it to gussy up our communications. One of the things that I saw people using LLMs for early on was the ability to turn a series of dot points into a longer, more descriptive email. Another thing I saw was stories of stressed out and put upon managers using ChatGPT to reduce the length of emails they received from subordinates. I was watching this in forehead-slapping frustration but then

, crypto critic and owner of the very entertaining Web3IsGoingGreat summed it up more succinctly than I ever could.

I don’t think I need to add to this, but the same basic thing seems to occur when people talk about branding strategies and similar “overviews” for guidance that have been generated by ChatGPT. All you do is tell it about the product, the core audience, the demography, its manufacture, the industry it’s used in, its approximate shape and size, materiality, your budget, your corporate brand, and ChatGPT will do the rest! Then it’s just turning the plaintext output into a full brief, transferring it to a slide deck, double checking the language, getting it reviewed, adding appropriate imagery, and… actually implementing all the things you need to.

In both these instances, and most other instances I’ve seen where LLMs are proposed as a productivity solution, the LLM amounts to an additional step that, if it provides assistance at all, does so only as a device for organising one’s thoughts. And that’s fine - the organisation of thought is important for effective communication. My question is whether the best apparatus for this is something as unwieldy as a large language model. Sure, it might be a little faster, but a notepad or a mind map works as well, and we don’t need to build a data center the size of the Cayman Islands to draw a mind map.

Empowering Data-Driven Decision Synergies

As a matter of fact, I think that in our globally connected world we need to disabuse ourselves of the notion that doing something faster is axiomatically the same as doing something with more efficiency. The fact that you, worker and fact checker extraordinaire, can ask a question and receive an answer in less time than it would have taken you to research or reason through it on your own does not mean that your answer has been achieved in a more efficient manner. I’d suggest that the amount of energy being expended in the process, once you take into account the amount of time it’s taken to train these machines, absolutely dwarfs any you’d spend hunting the information yourself in all but the most extreme cases.

Data centers use anywhere up to ten times more energy per square meter than a home or office, and the thing that enables LLMs to be as convincing as they are is an astronomical amount of digital storage and computing power. They have to be cooled, so the air conditioning systems are enormous. They have to run, which means huge arrays of graphics cards. The calibrated results need to be saved, so there’s a staggering amount of long-term storage. And this needs redundancy and it needs to be accessible with ease around the world, so there’s an absurd amount of infrastructure required. That’s actual building works, taking up actual land space, and actual physical materials. In the world we live in today, I don’t think we can continue to discount the real world space that digital storage represents, especially for innovations that amount to “doing lots and lots and lots of maths”.

Machine learning algorithms aren’t new. They were described in the ’60s and ’70s, but were largely an interesting sidenote to the innovations that were going on at the time, because they were so inefficient. One of the simplest versions of a proper multilayer perceptron (something we’d now refer to as a neural network) requires 784 inputs and 10 outputs, plus as many interstitial layers as you like, and the training dataset, MNIST, is over 10MB in size. That’s trivial on a modern machine, but back in the 1970s it was an unimaginable amount of computation power. The Pile, the dataset I mentioned earlier, is roughly five orders of magnitude larger than this, and that’s not taking into account anything other than the inputs. We’re talking industrial power draws here.
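To give a rough sense of scale for that small network, here is a Python sketch that counts the adjustable numbers (weights and biases) in a multilayer perceptron. The 784 inputs (one per pixel of a 28x28 MNIST image) and the 10 outputs come from the description above; the two interstitial layers of 16 units each are purely an assumption for illustration, as is the function name.

```python
def count_parameters(layer_sizes):
    """Count weights and biases in a fully connected network.

    Every unit in a layer has one weight per unit in the previous layer,
    plus a single bias of its own.
    """
    return sum(
        inputs * outputs + outputs
        for inputs, outputs in zip(layer_sizes, layer_sizes[1:])
    )


# 784 inputs and 10 outputs as described; the hidden layers are assumed.
layers = [784, 16, 16, 10]
total = count_parameters(layers)

print("parameters to train:", total)
```

With those assumed layer sizes the count comes to 13,002, and every one of those numbers gets adjusted over and over during training. That is the "lots and lots and lots of maths" even at the smallest end of the scale.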

Even in a case where LLMs are fed by sustainable energy solutions, they’re wasteful. They provide approximate answers to problems that can be solved with next to no memory. I can use a computer to solve the equation 28 + 14 using four bytes of memory. It takes ChatGPT a terabyte to store an approximation of that, and it doesn’t even get it right most of the time.

The Legitimisation Cycle

In his documentaries about NFTs and the Metaverse, documentarian Dan Olson describes the way in which proponents of hype-based companies get name brand or historically well regarded institutions to buy into a new technology in order to legitimise it. For NFTs, he used the example of billboards in Times Square, which signal to buyers caught up in it all that the hype is real. For the metaverse, it’s a fashion show or a local branch of a chocolate company for much the same effect.

The equivalent market for LLMs appears to be fiction writing, which makes sense. Demonstrating that your product is capable of producing text that matches the quality of human creative writers is a sure-fire way to show that you are really disrupting long-standing human endeavours. Publishers of independent magazines such as Clarkesworld have been inundated with machine-generated spam recently. The end goal of these spam submissions may be monetary gain in part, but the larger project is legitimisation. As it turns out, Clarkesworld saw the chaff for what it was and promptly closed submissions after being swamped with low-quality content.

The most recent and ongoing story that I’m aware of is a call by Space and Time Magazine, another Science Fiction periodical, for an issue featuring “AI assisted” work. As it turned out, the project has the financial backing of a venture capitalist who has connections to AI. The picture then became clear: Producing a special issue of a real literary periodical using AI will be a real boon for this VC, as it will represent a real-world use case for AI that he can show in one hand while asking for alms in the other. It’s an investment strategy by a tech disruptor, and the quality of the work means less than the amount that can be squeezed from potential benefactors.

If this happens, you can fully expect human input into culture to quickly dry up. One of the demands by the Writers Guild of America was that AI not be used to generate the literary works required for film and TV, and the studios refused. The WGA is now on strike, and who knows for how long.

This fight isn’t really between technology and tradition. It’s between, as always, capital and labour. The effort to legitimise this technology as an excuse to remove labour costs is transparent, and the pressure is mounting. That’s no reason to accept inhuman work simply because it’s cheaper. We need to do better for ourselves, and for our culture.

Endgame

Large Language Models, and the technology behind them, are remarkable things from a technological standpoint. The fact that a calculus process writ large can even begin to approximate a manner of speaking that resembles human communication is extremely impressive. But…

Let’s look at what I’ve demonstrated here. The models are often incorrect and potentially socially harmful to real world people when used as a research source, so that’s one end use out of the window. As a productivity tool an LLM is of limited use, as all it really does is rearrange your own thoughts and feed them back to you, obfuscated and (often enough) incorrect, which then takes more time and energy to parse. It excels at nothing and does what it does inefficiently. It uses a lot of energy that could either be put to better use, or removed from power demand entirely, reducing material and energy demand on local power grids. In the event that a suitable end use does eventuate after it gets forced into every conceivable corner by those who stand to benefit, it will be used as advertising by people with money as a reason to receive more money, and humans will be crowbarred out of that space by market forces that brag about how much better they’re making the world as they drive people into poverty. These aren’t brilliant, intelligent machines. They’re ignorant boxes spitting out nonsense. So what, in the end, can you do with an LLM, once you’ve taken all the actual use cases that it fails at out of the way?

Spam. You can just use it to spam the ever-loving shit out of people.

Thank you for reading. If you found this insightful, I’d really appreciate it if you considered subscribing, or sharing with someone else who you think might enjoy it. If you really enjoyed it, please consider donating to me via Ko-Fi. I don’t get paid to write this, and while I enjoy doing it for passion it does take a lot of time. You can find me elsewhere on my Linktree. Until next time, cheers.

Henry Neilsen

As part of my interest in showing my support to the fiction writing community, I’d like to share the great initiative by

, an awesome indie horror press company who is creating a charity anthology called THANK YOU FOR JOINING THE ALGORITHM. They are currently fundraising for the issue at their Ko-Fi and will be open to submissions once they hit their goal. Follow their SubStack and their twitter for more details. Please note that I am in no way affiliated with the press, I just think that this initiative is great.

1 This game is now known as “The Turing Test” in its creator’s honour.

2 I actually don’t know. I labelled them differently but then just drag and dropped them into the gallery, and I don’t know which one ended up where. I think that illustrates the point.